Made to Measure

In the latest season of Orange Is the New Black, an eligibility test sends the inmates of Litchfield Penitentiary into a multiple-choice frenzy. High scorers will supposedly land better-paying jobs (no one knows what kind, although the $1-per-hour wage is incentive enough), but the prompts are mystifying. “True or false: Ideas are more important than real things,” goes one. “Agree or disagree: Most people are brave,” goes another.

Later, Danny Pearson, a representative of the private prison corporation administering the test (and the jobs), reveals that the questions didn’t matter at all. The test was a ruse, plucked from the Internet, and the company simply chose the winners at random. Pearson explains why: “My system is to make the ladies think that there is a system, so that they don’t hate us for not getting the job. They’re mad at themselves for not having what it takes.” When a stunned prison official sounds a cautionary note, Pearson bats it away: “We’ve had a lot of success with this model.”

This puckish satire reflects a growing phenomenon: American economic life is rife with obscure systems of scoring, reputation modeling, and threat and risk assessment, and transparency is hardly their defining feature. Wal-Mart and other retailers use inscrutable test questions to screen job applicants (Agree or disagree: “I sometimes doubt the practicality of what I am taught”). And thanks to the marriage of predictive analytics, big data, and Internet surveillance, assessment of our nation’s workers and buyers can occur all day long as a constant background hum—no forms or tests required.

These new assessment systems remain cloaked in secrecy, but they determine a lot: which ads we see, whether law enforcement views us as a threat, and how much we pay for certain goods.

“The fate of groups is bound up with the words that designate them,” Pierre Bourdieu once said. By that token, data brokers and purveyors of analytics software are prescribing some grim conditions for their subjects. In one workplace study, which tracked employee biometrics and behavioral patterns, workers were divided into groups like “Busy and Coping” and “Irritated and Unsettled.” Other data brokers specialize in classifying consumers into highly specific demographic units—“Rural and Barely Making It”; “Ethnic Second-City Strugglers”—that serve as coded descriptors for purchasing power and risk of liability.

But in this world, perception is reality. A bad ranking or consumer score, even if it’s unearned or based on faulty data, may consign me to a category I don’t technically belong to. It may also create what Frank Pasquale calls “cascading disadvantages” (think of a low-income borrower who can’t escape the debt cycle because he must borrow at extortionate rates to pay back the last lender).

Last month in The Nation, Jathan Sadowski and Baffler contributing editor Astra Taylor wrote about the malignant effects of big-data-driven assessments. According to the authors, even well-established scoring systems like FICO “have not eliminated bias, but rather enshrine socioeconomic disparities in a technical process.”

Some new services purport to help consumers escape from the time-honored credit score hustle but only end up sending them deeper in. Companies like Lenddo cater to the “underbanked,” those who do not have access to, or choose not to use, traditional financial services. If you don’t have a FICO score or don’t have a Citibank in your neighborhood, it’s not a problem, Lenddo says. Just download the Lenddo app, which connects to your social media accounts, asks you to complete a profile, and then assigns you a proprietary “LenddoScore,” ranging from 0 to 1000, based on “your character and your connections to your community.”

Lenddo won’t reveal exactly how its algorithms arrive at your score, but it offers a few tips for keeping your score in check: Connect with “your family members, coworkers, and your closest friends” on the app. And don’t be rude. “In most cases, your social data will not hurt your LenddoScore,” says the company—but if your social media accounts show “little or negative interactions,” there could be consequences. In the end, instead of saving a customer from the distant tyranny of FICO or Beacon (two common credit scores), Lenddo has simply devised another proprietary metric for consumers to anxiously chase.

As a first-time author, I make my customary appeals to our indifferent god, the fiendishly complex Amazon marketplace, which I’ve come to see as the ultimate proving ground for these dynamics. The site’s coveted rankings mix definitive judgment with maddening opacity. An algorithm (and not a person at HarperCollins or Amazon, much less me) determines how much to charge for my book and when to adjust the price. A reputation score flashes like a billboard for every potential purchaser, thereby pushing scores ever lower or higher with the kind of recursion that is typical of automated scoring systems. Some authors, I’ve heard, juice their Amazon stats by asking friends and family to buy copies of their books at synchronized times.

As one biometrics researcher told the Financial Times, predictive tools can become prescriptive ones. This could be a mantra for our new age of metrics. Increasingly, we are not only measured; we bend our actions toward the things that measure us.

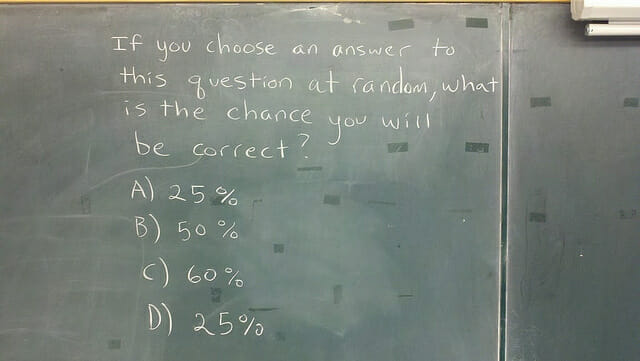

We know that the system is rigged, so we try to game it in return. But for our corporate masters, it doesn’t matter if we understand the rules. They only need us to keep playing.