What is it about social media that’s so depressing? I’m asking for myself. And I mean beyond the obvious: that much of the imagery and information presented there accelerates suffering and despair; that many people who use it are themselves plainly, often explicitly miserable; that it distracts more sneakily and time-warpingly than any other distraction; that it makes you feel as if everyone is hanging out without you, demands you masochistically crave this feeling, and incites you to inspire it in others; that it gamifies already competitive social relationships and posts your score for the disedification of the viewing public; that all this is by design and in service of a goal so banal that it sparks few to anger—they don’t care about our souls or our ruthless private-messaged gossip, just our data, where we shop and what we buy and how old we are when we buy it. Though I understand the sweeping consequences of a monopolistic data marketplace in nefarious partnership with the security state—the companies have pictures of our faces and robots that can identify them!—like many I find it difficult to care, at least to the extent that caring means changing my life. I mainly wish I could get it back.

This is how I feel—wish I could get my life back—but it isn’t the right way to put it, though it was fleetingly cathartic to type it out. Do I mean I want to return to the life I had when I was fourteen, before I began to worry about MySpace Top 8s or the messages I convey with my profile pictures? Absolutely not. Do I mean I want to, as the post-digital digital gurus have it, “reclaim my time”? No. But both nostalgia and denial are useful if you want to feel better, fleetingly, about—to use a meme I would like if it wasn’t a meme, “*gestures vaguely around at*”—everything.

A refrain among my peers and colleagues—“what might I be doing if I weren’t looking at Twitter all day?”—presupposes that deep down we’re not really like this, that there’s some substrate of reality beneath this manic and useless activity, a noumenal world in which we accomplish tasks or experience leisure without tabbing over to our curated roster of news and opinion every five minutes. But the fact is you can look at Twitter and Instagram and, if you’re desperate, Facebook continually, one thousand times a day, and still get things done—there is no separate, more real reality. In the two years since I went freelance, I’ve written three books and many long essays, had two serious relationships and an active social life, and traveled frequently, all while tweeting—as a rough estimate gleaned from the program I use that deletes all my posts that are older than ninety days—between two hundred and four hundred times per month. Of course, the books and the articles might not be as good as they could have been, but they are good—do not screenshot this and mock it—and anyway I often write about social media. Besides, procrastination expands to fill the space life provides; if I were writing to you from 1880 or 1930 or 1975, I probably would have spent all the time I used this week to collect retweets and passively monitor the online activities of people I’ve never met to instead pace or stare at the wall or flip through old photo albums or call my friends on the phone or whatever else it is they did to procrastinate before the flagitious rise of the gig economy. The differences are: 1) I work much more, statistically, than I would have in the past—partially because the lines between work and not-work are blurrier; 2) that work is much easier, because of the internet; and 3) now my various bosses can see what I’m doing and thinking when I’m not working. As can anyone else who happens to stumble upon my account. I sometimes meet people from Twitter who assume I’m unemployed, which makes me worry they assume I’m rich.

The Good, the Bad, and the Real

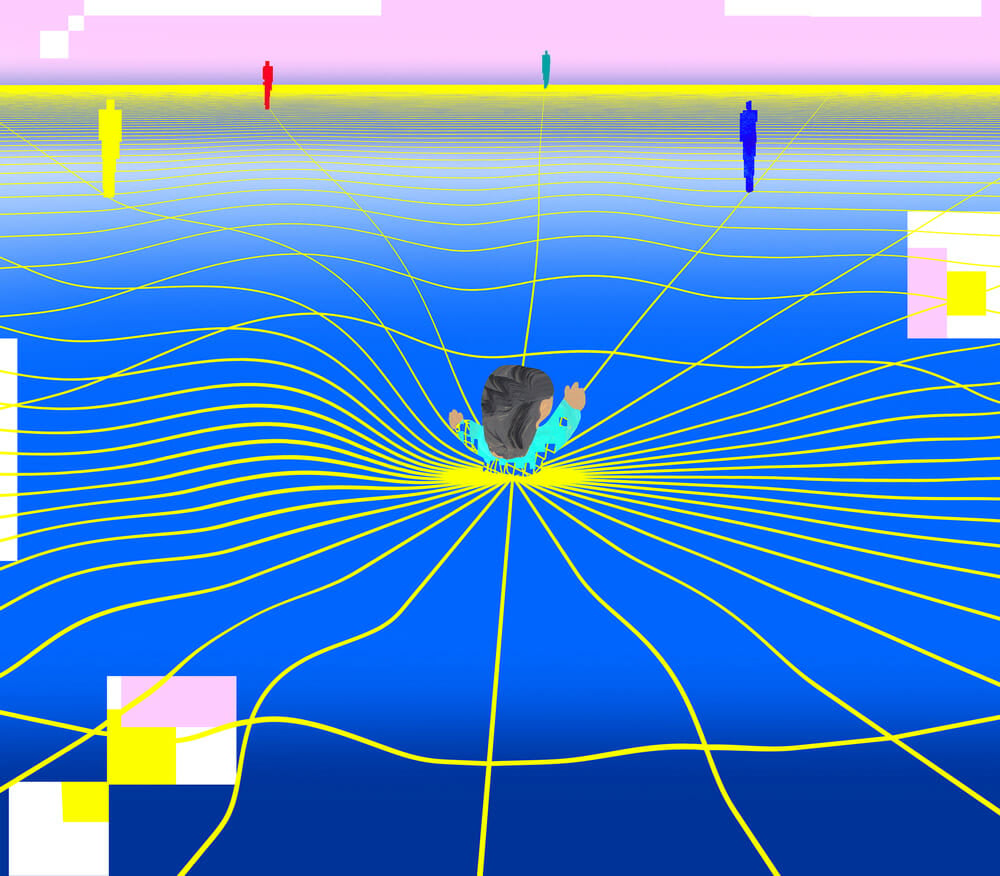

In 2011, the social media theorist Nathan Jurgenson coined the term “digital dualism” to describe the fallacy that there is a dividing line between the “virtual” world and the “real” world; today he runs an online magazine, Real Life, dedicated to examining the effects of the collapsed distinction. It’s funded by Snapchat. Irony is often confusing for people on Twitter, so let me be unambiguous: this is a post-internet strain of irony (its 180-degree inversion) so consistent with your most cynical expectation that its manifestation is actually surprising: Snapchat, the company perhaps most invested in merging the real and the virtual, pays for a website called Real Life. Even if Jurgenson’s message is correct, it hasn’t yet penetrated the emotional defenses of internet public opinion, which prefers to discount the anxiety and discomfort and real-life negative feeling that arise from one’s social media interactions as “fake”; all the better not to deal with what it means when a friendly acquaintance/colleague “likes” a tweet, posted by a woman you’ve never met, that says you should kill yourself. It works in the other direction, too: both parties would surely feel guilty if you killed yourself. (After all, children and teenagers do it more and more in reaction to “virtual” life.) Which is why the friendly acquaintance/colleague continues to greet you cheerfully in the street, as if you couldn’t possibly know she has done this or care; and it explains how the woman you’ve never met who thinks you should kill yourself will act when you eventually do meet her—she’ll be normal. What’s funny is that in this hypothetical scenario you’re not even famous, though an unknown number of people might be paying attention to you at any moment, which is what you thought you wanted. Why else would you keep publishing things, in public, for free?

There’s an argument to be made about social media as a force for political mobilization—or, say, making friends, whom I may speak to multiple times a week but see only two or three times a year, if ever; research shows shared hatreds are more binding than shared interests—but first I’d like to talk a little bit more about myself. When I wake up every morning I look at my phone to see what has transpired in the night, the final waking moment of which is usually the last time I looked at my phone. This is bad for my sleep cycle, I know, and for the nerves in my hands—I refuse to get one of those knobs you can put on the back of your phone to make it easier to hold, which I see as not just admitting I have a problem but resigning myself to it, as well as broadcasting to strangers who see me using my phone in public that I am a Phone Person (worse: a Phone Woman)—but more important, it is just bad. What I dislike about my life are not the facts of it but its texture, the false tension and paranoia and twitchiness. I exist in a state of “might always be checking something,” and along with being unpleasant, it’s embarrassing.

Why don’t I just delete my accounts? Well, I agree I should. But I don’t want to. I mean, I want to, but I don’t want to. There are two activities you can participate in on social media, giving and seeking attention, and both feel very urgent. I look at Twitter because I want to know what’s happening, when it happens, what people are saying about it, and what people are saying about what I say about it. I grew up being told it was important to read the news—remember that guy in the Times who refused to read any news, at all, after Trump was elected? Don’t want to be like that! And out of some quixotic obligation to collectivity I still believe that knowing what is happening in the world is important, even if the consequences of that knowing are just better party conversation and an erased sense of not-knowing. The news happens on Twitter, too: politicians hoping to control their narratives follow the president’s lead and tweet their statements instead of giving them to reporters, and those of us on Twitter respond in a way you can’t trust reporters to faithfully transcribe—I want to see for myself. In addition to mourning celebrity deaths and maligning absurd presidential decrees and fretting about nuclear war, I also want to be there when they announce the experiment is finally over and we can all throw our phones out the window. It’s as easy to imagine the total collapse of Twitter as it is my own nervous breakdown (which I would probably tweet about).

I also came up during the golden age of reality television, which must have taught me how to find the filler of others’ days as fascinating as their sex lives, or rather how to relate the two—pretty rookie to figure out who’s sleeping with whom using social media—as well as how to avoid fixating too much on the boundary between real and fake. Part of the idea that Twitter is not “real” comes from the truth: it’s not an accurate reflection of society in total. But there’s nowhere else you can get such a large sample size, and so quickly; as long as you keep the context in mind there’s nowhere better to watch how people behave under certain conditions, particularly if you’re a writer. Human beings are ridiculous—sanctimonious, rude, two-dimensional, so obvious it’s shocking, like children—when they want to be watched, when they’re acting within the endless discrete present tenses that reward self-interest but not self-awareness or preservation.

It’s harder for a writer to get this material merely by “overhearing” it, and besides that’s a genre of Twitter thread—the shamelessly extended eavesdrop. In any case, there’s a sense among writers who are “extremely online” that we have to be on Twitter for work, and it’s not true—but it does help. Even obnoxious self-promotion tends to get you more freelance work—the websites are always half-empty—and more importantly to “log off” would mean losing step with much of the world. Look at the most notorious dissenter, Jonathan Franzen. His most recent novel, Purity, in which he attempted to address some of the issues of the internet, was not very good, because although he can identify the systemic problems, he refuses—to use a social-media word—to “engage” with the details of the system that would affect the characters of his novels. It’s an approach that would serve him better on Twitter. (That’s also irony.) Even writers who purport to “not do social media” will later reveal that they’ve started anonymous accounts, which allow them to look at it about as much as anyone else. Others who seem relatively inactive are often lurking. You can tell because they often casually mention they saw your recent tweets.

Posing into the Void

As for the matter of politics: when I post a political message on social media, I may be raising slight consciousness, but it depends on who and how many my followers are—most of mine are fellow writers and media professionals and unknown men who, I have to assume, given this was my intention in choosing it, have been at least somewhat seduced into reading my daily musings by my profile photo, which, not unlike when I was fourteen, depicts me as a woman with sex hair and a pouty mouth. My only hope of exposing my selfless socialist feminist beliefs to a wider audience is to go viral, which is more likely to happen if I am direct and dumbed down—do not screenshot this and mock it—which would, like a game of telephone, expose incomplete versions of my selfless socialist feminist beliefs to two main groups of people: those who will not investigate them further to learn more about them, and those who will hate them, will never be convinced of them, and are thus compelled to insult me, if not call for my death in more frightening terms than the retreating passive aggression of my friendly acquaintances/colleagues. But, of course, it’s foolish to lament the level of discourse on Twitter—what else would you expect?

Regardless of my political statement’s unquantifiable impact, I’m inevitably saying, “This is what I, an educated person, think. I’m not like that guy who doesn’t read the news!” The movement to abolish ICE was supplemented in no small part by the diligent tweets of one guy, but I am not that guy. Are we all supposed to be that guy? That’s sort of the message I get. I suspect the main thing for most people is that it (tweeting) makes them feel, briefly, like they’re helping, but beyond politics, it usually makes me feel worse. If people “engage” I can’t stop checking back to see who and how many; if they don’t, I feel like I’ve made some grave error of judgment that will soon trickle down into my professional and social prospects. My self-deprecating commentary—“nothing more embarrassing than being complimented on your Twitter thread”—never quite manages to ironize itself out of what it is: a plea for attention among infinite other pleas for attention. The “connection” we were promised is not so different from a broadcast: I make up a character and play it for ratings. It’s amazing that a tech company can make me—me!—divide my self-worth into endless discrete moments and distribute them among people I’ve never met and on average don’t think are very smart. I’m not being sarcastic: it really is amazing.

Does anyone’s ideal world have social media? Recently, a friend I met on Twitter, who has many more followers, and tweets much more often than I do, approached me at a bar and said, totally in earnest, “I’m glad to see you’ve been more online lately.” I was too stunned to reply with what I was thinking, which is that I am obviously a profoundly unhappy person who needs to delete her Twitter account and go to therapy. He’s not a “troll,” but I felt he was trying to lure me under the bridge. In reality, though, I was already there.