Nudging the Lexicon

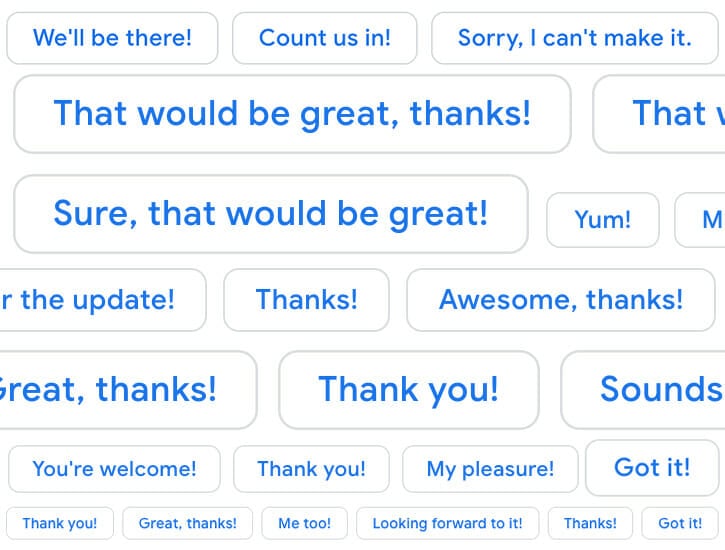

Gmail’s “Smart Reply” feature offers three options in a choose-your-own-adventure game at the bottom of received emails: “Got it.” “Got it, thanks!” and “Looks good!” are common choices. Sometimes the suggested responses are lightly ridiculous. An “I love you” email can prompt “It works!”—perhaps an overcorrection from an early bug when the algorithm was saying “I love you” unprompted all the time? But mostly the Smart Replies are bland formulations of convenient and functional corporate language. They confirm receipt, accept a proposed meeting time, or express general positivity!

This is The New Gmail, which users could opt into as early as April, but which was rolled out to 1.4 billion active accounts this summer. Like most changes to the design of our daily-use technology, The New Gmail began as an annoyance, one roundly condemned on Twitter, the internet’s ne plus ultra of usage and style. A few weeks later there was a subtle change: some people were copping to using it, or if not actually using it, then being surprised by the spot-on replies. “Not a technophobe, but I find myself refusing to use Gmail’s auto-replies even when they are exactly what I intended to write. I’m a writer, dammit!” tweeted Lane Greene, the language columnist for The Economist. In late September, The Wall Street Journal reported that 10 percent of all Gmail responses were being sent by Smart Reply.

The reply suggestions—which Google now allows users to turn off—are not the only major change to Gmail. There’s an even more demeaning feature: Smart Compose, or suggested-email-writing. If you leave the option on, you can see a ghost-text of what Gmail thinks you’re about to say and hit “tab” if that’s it. Type, “How” and the algorithm will recommend, “are you?” Little did it know that I intended to type, “will we continue to live in this Hades of aphasia and manufactured communication?” Like the suggested replies, the auto-compose feature is geared toward the professional: type “What did you discuss at the . . .” and it ad-libs “meeting.” And, like the replies, it’s polite, always seeking to add a salutary “thanks” after your commas.

Just as bad, there’s a feature called “Nudge” that reminds you of emails you’ve ignored, or, more painfully, emails written by you that have been ignored. With its time-based reanimation of digital content, it’s a distant cousin of Facebook’s nostalgia machine—three years ago on this day you became friends with so-and-so—but with more obvious “professional” usefulness. “Follow up?” it ask-demands, imploring you to generate more email traffic. Emails that once would have lain dead and buried in the dirt of your inbox now have a life of their own—and, really, ignore these nudges at your own peril.

Is there a reason to be so ill-tempered about these features that I’m not being forced to use, that are probably, on balance, convenient for people working in high-email-traffic office jobs? Yes, there is, thanks! Automated communication is not new, but it’s starting to get scarier and more efficient. The more email we produce, the more we beckon the arrival of an all-encompassing two-way interchange between human language and generated speech.

The algorithm is mimicking us, but now we’re also mimicking it. The algorithm (which I’m using as shorthand for a series of complicated machine-learning processes) has been absorbing human email-speak by creeping through billions of perfunctorily worded emails, and it is now spitting that language back at us. It’s a refraction, then, of how we write to each other online. But suggestions are also manipulations, as we might know from, say, Amazon’s effective monetization of RIYL (“recommended if you like”) logic. These seemingly gentle intrusions into our digital lives are not as passive as they might appear.

In the case of digital advertising and marketing, the motivation behind these recommendations is glaringly obvious: buy this based on everything we know about you. It works. With Gmail, it’s a bit more diffuse, though no less craven. Google is running the rat race to develop automated communication and machine learning technologies that will have unspeakable monetary value in the coming decades. Alphabet chairman John Hennessy claimed in May that Google’s voice assistant system, Duplex, passed the Turing Test, the vaunted AI threshold for human-robot communications; one “tech expert” said he couldn’t distinguish between the voice of a human at a hair salon and the robot, which had learned to say “Mmm-hmm.” So Gmail’s new email features, benignly annoying as they seem, are a long-term bid for monopoly and profit by way of accelerated automation.

But it’s not just about the scourge of technopoly, which day after day confirms its deleterious effects. It’s also about the automation of perception: these algorithms will gently manipulate, or perhaps nudge, our lexicon. Even those who don’t use Smart Reply will see its suggestions at the bottom of their emails. Empty phrases like “Got it, thanks!” will “occur” to us more often, which means we’re more likely to select from Gmail’s three shades of bleakly positive and corporate-readymade replies. “I think it’s perfect!” we might find ourselves saying, in response to a memo draft.

Gmail’s suggested replies and auto-compose features rely on communication by mental proxy. An email reading “I’m hungry!” can prompt the response “Yum!” This is outrageous, but it has a primitive relationship to how we think and speak. The function of these replies is to eliminate complexity, to pare communication down to dumbness, to “acknowledge” or “affirm” without saying much of anything. How do we feel about the degeneration of language at the hands of monopolies? Looks good!