The Zuckerberg Follies

In the introduction to his new book, Antisocial Media, Siva Vaidhyanathan writes that there are only two things wrong with Facebook: “how it works and how people use it.”

But within that wry formulation, Vaidhyanathan finds many layers of criticisms—about the way Facebook sucks people in and about the passive way people get sucked in; about the way Facebook violates its users’ privacy and the way users don’t collectively push for effective government regulation; about the way the company’s global reach makes it more than just an American phenomenon and the way our national media analyzes it mostly in terms of how it functions in this country.

And Vaidhyanathan asks questions almost never taken seriously in the coverage of the technology world. What is this giant company doing to us as democratic citizens? “Nothing about Facebook, the most important and pervasive element of the global media ecosystem in the first few decades of this millennium,” he writes, “enhances the practice of deliberation.” Thus, the book’s subtitle: How Facebook Disconnects Us and Undermines Democracy.

This is Vaidhyanathan’s fifth book. But this one turned out to give him an unusually harrowing few weeks this spring. He was done writing and the manuscript was in the hands of his publisher. And then his story blew up when the New York Times and The Observer of London reported in March on how a Trump-connected company, Cambridge Analytica, ended up with Facebook data on fifty million users—later said to be eighty-five million, or more. Suddenly the Facebook “breach” was in worldwide headlines, and Facebook CEO Mark Zuckerberg was being hounded into an appearance before Congress, and the book that Vaidhyanathan had been working on for two years was not scheduled to be published until this September.

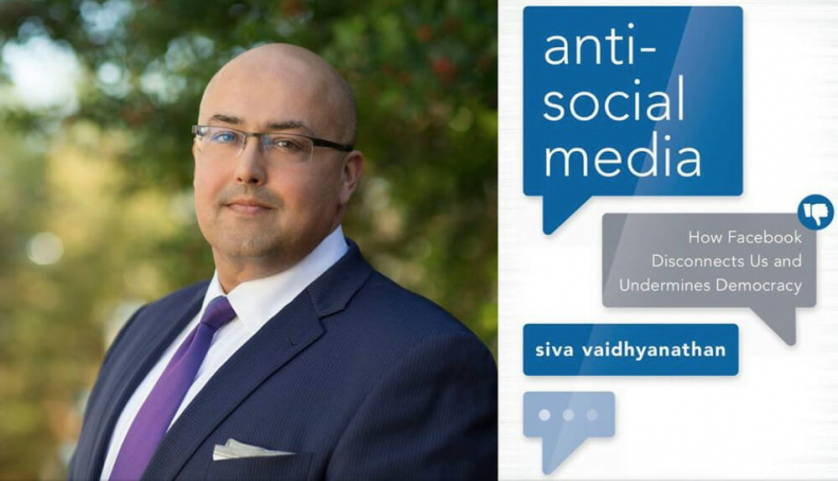

Oxford University Press scrambled, and moved the publication date to June 15. The book is now finding its way into readers’ hands. I spoke with Vaidhyanathan in May by phone. He is a professor in media studies at the University of Virginia, where he also directs the Center for Media and Citizenship. (Disclosure: he has also written for The Baffler and is currently a member of the board of The Baffler Foundation.) He told me about his winding path to a career as a media scholar. After he studied history at the University of Texas and then continued at UT to get a PhD in American Studies, he found himself teaching at New York University, where he was a colleague and friend of the late Neil Postman, an acerbic critic of the role of media in American culture. Vaidhyanathan had specialized in literary and intellectual culture at the turn of the twentieth century. But as the internet became a new force in the 1990s, he began to see some of the same concerns about monopoly, culture, and democracy at the turn of the twenty-first century.

He published his first book in 2001, Copyrights and Copywrongs: The Rise of Intellectual Property and How It Threatens Creativity, and since then has become one of the leading media scholars writing in the Postman tradition, a lucid, engaging critic who recognizes how intricate the relationship is between media and democracy. This is a transcript of our conversation, edited for length.

Dave Denison: You wrote in March that any campaign to get people to “delete Facebook” is going to be ineffective. Why?

Siva Vaidhyanathan: Largely because Facebook is valuable to too many people. The campaign to delete Facebook was one of wealthy, connected Twitter-savvy North Americans. It’s absurd to imagine that people in the Philippines or in Brazil or in India were going to join this campaign. That renders it politically ineffective. But it also renders it counterproductive. By removing yourself from Facebook, you remove yourself from the concern. If you are active on Facebook and you watch how people relate to each other and how it affects you, you can be sensitive to the larger condition. And if you want to participate in a large movement to rein in Facebook, to regulate Facebook, or to break up Facebook, it’s necessary to understand Facebook.

DD: At the same time, I know plenty of people who feel a great sense of relief in just getting away from it. One person recently told me, with apparent satisfaction, “I’ve been Facebook-sober for almost a year now.”

SV: And you know what? I applaud that. I think if something is bad for you personally, by all means, get away from it. But Facebook brings value and satisfaction to more than two billion people. They’re not all fools. They’re not all dupes, although they often are addicted—or “habituated” is a better word, right? However, I take breaks from Facebook, as well. I take thirty-day, sixty-day breaks. To get steady, to pull back from the noise and the turmoil. Often to finish a writing project. But no one should pretend that those are political acts. And this is my larger point here. To be political is to be engaged, to have a stake. To remove yourself from having a stake is to be apolitical. Facebook demands political responses. And these—I hate to even use the word boycotts—these soft, individualistic removals, or recusals, are not boycotts. They’re not politically organized; they’re not intended to deny a powerful force something valuable in a systematic way, they don’t really put pressure on anybody who matters. They’re simply self-satisfying moves. They’re acts of moral indignation and not relevant political actions.

DD: Did anything important come out of that appearance by Mark Zuckerberg in front of Congress?

SV: I think so. Nothing tangible emerged from Zuckerberg’s testimony, nothing actionable.

But I think there was a clear distillation, or a clear realization, of two things. One: Mark Zuckerberg is a deeply sincere and concerned person. He is not duplicitous; he is not manipulative. He’s naïve. And he’s uneducated. And that was really clear. His sense that we could solve all problems through the magic of artificial intelligence? That needed to be said in that public forum, so he could be exposed for his naivete. That was an important move. It was also really important for people to hear his mantras. To hear the mantras of artificial intelligence, to hear the mantras about Facebook meaning well, being responsible, trying to connect the world, trying to improve the world. All of that is fundamentally nonsense. But Zuckerberg truly believes that stuff—that by connecting human beings we will all start behaving better. Thousands of years of human history to the contrary, he thinks that the mere act of connection contains some positive force, without anything else being present. Without ideological change, without spiritual change, without a sense of flesh-and-blood recognition. He has this bizarre idea that the Facebook profile will make some sort of positive difference in the world. I mean, it’s absurd, when you think about it.

DD: But I think a lot of people have trouble seeing him as well meaning, and I wonder sometimes how many of us think of Zuckerberg as repellent because of Jesse Eisenberg’s portrayal of him in The Social Network.

SV: I often tell my students that The Social Network is like a cartoon. The Zuckerberg character in The Social Network is not Mark Zuckerberg. Jesse Eisenberg’s portrayal of that character is flat. [The character] is motivated merely by resentment and envy—and that’s just not Mark Zuckerberg. Mark Zuckerberg is an evangelist. He is deeply committed and has always been deeply committed to an ideology of global uplift through digital connectivity. He’s never deviated from this position. He has seen his members, or his Facebook users, instrumentally, right? So he has never seen us as full human beings. He sees us as producers of data. But he wants us to be happy, the way that someone might want a cage full of gerbils to be happy. The problem is, his sense of what is best for us may not be our sense of what is best for us. And, he has never come to the conclusion that we gerbils can be really nasty to each other. That seems to have escaped him. The potential for human cruelty never seems to have factored into his vision of what he is producing.

And he continues to use words like “community,” a word he does not understand in the least. This is what I mean when I say he’s uneducated. Because he keeps using terms like community, which are complex, fraught concepts, and he doesn’t stop to think what that means. He doesn’t stop to think about the dynamics of a community, the effects of a community, the limitations of a community, and even the very definition of a community. You know, if someone’s going to use community as the core goal of a multi-billion-dollar company, maybe a couple of days of reading would help. He’s really acting on this core faith that he developed in his teens as a member of sort of a quasi-hacker community, and he has decided that that vision of the world is indisputable. He’s now facing, maybe, a crisis of faith. Because he’s having to concede that it’s not all as utopian as he had imagined. But it’s too late, because he built this thing, and now 2.2 billion people are using it and are not in the mood to stop using it, and have been using it toward really bad ends. What do you do? The thing he built is now too big to govern.

DD: Getting back to Congress, I like the anti-trust idea. Wouldn’t it help just to break the company up?

SV: It would help to break the company up. It wouldn’t completely solve every problem, but it would go a long way to minimizing its power and influence. Retrospectively, we never should have allowed Facebook to buy Instagram. And we never should have allowed Facebook to buy WhatsApp. These are two competitive businesses. These are two very successful social-media systems that are now Facebook products, and Facebook shares all the data of all the users of Instagram and WhatsApp.

It would also be great to break off Facebook’s virtual reality project, which is called Oculus Rift, because virtual reality has the potential to be a rich social medium, as well. It has the potential for therapeutic applications. So in that field, we should want multiple companies experimenting, [and] strong regulation upfront, to make sure the powers of virtual reality are not abused. And the last thing we should want is for a data-sucking company like Facebook to be monitoring everything we do in virtual reality. Because there are going to be people who are going to want to use virtual reality for medical and psychological treatments. There are going to be people who are going to use virtual reality for sex. And we’re going to have to have very strict, very carefully thought-out regulations on how companies will allow virtual reality and how companies will monitor our use of it and our data. We cannot have that mixed in with all the other data that Facebook has about us. That’s just way too dangerous.

DD: I imagine as you talk to people about your book there will be one question that is always going to come up from ordinary users, along the lines of people saying, “So what if my data is shared with advertisers. Why does that hurt me? Why does that hurt people?” A lot of people probably don’t hate targeted ads, and maybe are not motivated to care that much about data protection. I mean, if they look at it in terms of “O.K., I was shopping for shoes, and now I’m seeing shoe ads.” Why is that bad?

SV: It’s very important to think about data protection and privacy as an environmental issue and not an individual issue. It’s important to remember that while you might not be vulnerable, because of your position in life, others are. It’s not just Google and not just Facebook that hoard this data. It is other companies that profile us and that gather this data. And we don’t know where it ends. In the 2012 campaign, the Barack Obama campaign managed to scoop up Facebook data on every American. It had better, richer, more comprehensive data than Cambridge Analytica did. So we’re talking about the head of state—the head of state’s political arm had intimate records of our connections, our affiliations, our opinions. If you care at all about civil liberties, if you care about the act of profiling based on religion or ethnicity or affiliation, you should care that the head of state had access to all this data. Now, we’re lucky that it was of all people Barack Obama, and there seemed to be some kind of firewall between the campaign and the government. What if it’s not?

DD: And I’ve seen the Obama people say, “yeah, we did that, but we did that legitimately, not like Cambridge Analytica.” What’s the essential difference between the way the Obama campaign used Facebook and the way the Trump campaign used Facebook?

SV: I’m not sure what they mean by “legitimate.” Look. The difference is—almost nothing. The difference is that the Obama campaign worked for the president of the United States, a person who has the power to kill people. So it’s actually much worse. But Cambridge Analytica doesn’t actually do anything. It’s a joke company, with no record of success or power. It’s affiliated with terrible people. We’re lucky that Barack Obama wasn’t interested in using this data to round up people of a particular religion or ethnicity or political persuasion, and put them in camps. Around the world, Cambridge Analytica works for people who are willing to do that. They worked for [Uhuru] Kenyatta in Kenya. Cambridge Analytica worked for all sorts of nasty authoritarian nationalists around the world. What happens when Facebook data about millions of people in Kenya ends up in the hands of a political consultant who is working for an authoritarian nationalist political force in Kenya? Then things get bad. That’s the big problem.

DD: Are the two campaigns, the Democrats and the Republicans, in 2020 going to be able to tap into this data successfully the way that Obama and Trump did?

SV: Obama and Trump used Facebook very differently. By the time Trump came along, Facebook was no longer letting campaigns have this data. Facebook decided to take it all in-house, and do it all for them. This is why I don’t think Cambridge Analytica mattered at all to the Trump campaign. Trump didn’t need Cambridge Analytica to profile American voters—Facebook did it for him. What happened in 2015 was that Facebook decided “we will no longer share the data outside of Facebook, we’re going to hoard it for ourselves and we’re going to create our own political consulting business.” That’s what it did. So in 2016, Facebook embedded Facebook employees with major campaigns around the world. It embedded people in the campaign of Narendra Modi in India. In early 2016, Facebook embedded people in the campaign of Rodrigo Duterte in the Philippines. In mid- to late-2016, Facebook embedded its people in the campaigns of Donald Trump and Hillary Clinton. Difference was, Hillary Clinton’s campaign was run by political professionals who thought they knew everything. They relied on conventional wisdom, all of the data sets that the Democratic National Committee and the Obama campaign had given them. And they had their own independent software platform that was created to track voter sentiment and motivate people. That’s what people who went door to door used. But they didn’t do much on Facebook; they didn’t buy many ads on Facebook. The Clinton campaign bought ads on television and radio, the old fashioned way, the expensive way—because they had all the money!

What Trump’s campaign did was, they brought on the guy who had run the digital marketing project for the Trump Organization, and he just said, well I know how to do marketing, and I know how to do Facebook marketing, so we’re going all Facebook. It’s cheap, it’s dependable, we are going to use Facebook to clearly target segments of the electorate that might cause trouble. They focused on Pennsylvania, on Wisconsin, on Michigan, on Florida, and a few others. And they simultaneously targeted ads at a small segment of the electorate that expressed interest in a particular issue, to motivate them to vote. So if at some point you had expressed strong support of the NRA and gun rights and you lived in Florida, you were getting a lot of ads for Trump tagged to your primary motivation—which Facebook knows. So the Trump campaign could run thousands of different ads in Florida alone, and surgically motivate small groups of voters. In addition, Trump made sure to spread ads to select groups of Clinton supporters to deflate their enthusiasm. They targeted ads to men of Haitian descent, reminding them of how much Bill Clinton botched the Haiti relief effort just a few years ago, after the big earthquake. And targeted ads at African American voters reminding them of Bill Clinton’s crime bill. And they targeted ads in Michigan and Wisconsin, reminding people that Hillary Clinton was taking [them] for granted. Hillary Clinton didn’t go to Flint for months. It was luck in addition to skill, but Trump was able to move a hundred thousand votes across Wisconsin, Michigan, and Pennsylvania—that’s what decided the election.

DD: But back to 2020, do we know yet how much of this is going to be effective in the next presidential campaign?

SV: I don’t think we know, but I think we know that a tremendous amount of money will go to Facebook. That’s all we can predict. We do know that Facebook now wants to enforce some sort of transparency, so we can hopefully trace who bought what ad. It remains to be seen whether that will be effective, especially in the absence of federal regulation setting such standards and creating enforcement. There’s no one to punish Facebook if Facebook fails. Facebook’s trying to head off regulation by doing this, this transparency effort. But ultimately, transparency doesn’t matter. The same practices will occur. Facebook is committed to being a factor in global politics. And it not only wants to make money off it, more importantly, it wants to matter. Facebook wants to be the place where we conduct our politics. And that is something that should concern us.

DD: You wrote quite interestingly in the opening of your book about coming in to meet, for the first time, Neil Postman. I finally got around to reading Postman’s Amusing Ourselves to Death last year. He was writing in 1985 and so his emphasis then was on television. You read it now and you really wonder how his argument has to be altered by understanding the internet, which is not like television in a lot of important ways.

SV: As I wrote in that introduction, Neil Postman and I were friends, I considered him something of a mentor. But I rarely agreed with him back when I knew him and worked with him. The essence of our relationship was friendly disagreement about many things—not least of which was that he was a Mets fan and I was a Yankees fan. But over time, I’ve come to see that his habit of mind when it came to media was really valuable. So when I re-read Amusing Ourselves to Death I don’t walk away saying “He nailed it.” I walk away saying he has offered some important tools and a language through which we can understand a long trend in American media, and in fact global media. A trend toward immediate gratification. While there are so many structural differences between how we engage with digital content and how we engage with television, those things are converging and the one consistent phenomenon across all of that is immediate gratification. The ethic of convenience and immediacy is so overwhelming. Why do we like Amazon? Because we type a few buttons, we click, and it shows up at our door the next day. If it’s a book, an ebook, it shows up on our devices instantly. The convenience is what has structured our habits.

DD: But also, like Marshall McLuhan, he insisted that we need to understand what is essential to each kind of medium. And he was talking about what was essential to television, which is entertainment. Facebook alone is so many things to so many people that it seems hard to pinpoint what is essential to Facebook.

SV: One of his insights into television was that it had become what he called a “meta-medium.” Postman defined a meta-medium as a medium that contains and structures and defines all other media, all previous media. He made a convincing argument that the emergence of television changed radio. Facebook is a meta-medium, as well. The experience of being on Facebook is the experience of encountering video that resembles television; it also means you encounter text, it means you encounter photographs, poetry occasionally. It is a site, though not the only site, for encountering this variety of media and expression. It performs similar roles to television in that sense, but it is a very different experience than television. It’s important, as Postman guides us, to pay attention to the specific nature of Facebook. In chapter one of my book I do an almost phenomenological dive into the experience of using Facebook. What does it mean to watch that flow of your news feed scroll up? What are the items you see? What does that say about you and your world? What is the feeling you get, as you do that? My opposition to Postman at the time, between 1999 and 2002, was based on a vision of digital media that seemed to have the promise of openness, of democracy, and of depth. If you remember back in those days—maybe I wasn’t so far from Mark Zuckerberg in this—I saw the rise of blogs, independently produced websites, of communally edited platforms, as being extremely exciting and potentially empowering. I shared that optimism in the early days of this century that we could build digital systems that were going to render Postman’s arguments irrelevant, or quaint, or dated. Turns out that Facebook basically revived the importance of Neil Postman’s work.

DD: I think so, too. Getting back to that key point that he insisted on, what is essential to this particular type of media? You know that Mark Zuckerberg would put it in a phrase, as the [Facebook] mission statement says, “To give people the power to build community and bring the world closer together.” If you are trying to answer him, to what really is essential—just looking at your table of contents, “The Pleasure Machine,” “The Surveillance Machine,”—you’re talking about addiction, surveillance, attention, sometimes benevolence, sometimes protest, sometimes disinformation. All of those, but it’s hard then to say what is the truly essential part of this media.

SV: I don’t know that there is an essential part, except this obsession with connectivity. And the paternalistic structuring of our relationships. Everything about Facebook is paternalistic. Everything about Facebook treats us like hamsters. The very language that Zuckerberg chooses, that he is caring for us and that he’s empowering us—like we need him to do that for us. Come on. The essence is this obsession with bringing more people into the platform, at all costs, and inviting them to connect to as many people as possible. Giving them scores. How many people liked your post. How many friends you have. All of this is numerically obvious to you when you use Facebook. That gamification of Facebook prompts us to reward Facebook for rewarding us. That’s the habituating nature of it, right?

DD: Nowhere—I don’t think—has he ever said, or has any serious person ever said, that a key function of this is to improve democracy and deliberation.

SV: Absolutely not. He has hinted that he thinks politics will improve if we get to know each other better. After the revolutions in Tunisia and Egypt, Zuckerberg was very careful not to strut and declare victory, but in his letter to investors a few months after those uprisings, he very clearly hinted at the fact that his platform could be used to help spread democracy. He never wanted to take credit. To his credit, he did not take credit. And unfortunately a bunch of very shallow thinkers decided to give Facebook and Twitter credit for what actual human beings did by putting their lives on the line in the square, facing down guns. But he was willing to declare in that letter that Facebook had the potential to spread democracy. And it wasn’t that long ago when a lot of us actually thought—I was a cynic about it—but a lot of us actually thought, wow, you give people the power to connect and the power to organize, and you spread information outside of the state, and good things are going to happen. It just never occurred to a lot of people that ISIS was looking at those same tools, saying wow this could really work for us.

DD: I liked what you said somewhere that maybe the highest dream we could have for Facebook is that we would not expect any of those social benefits and have it decreased in importance so that it primarily exists to keep us in touch with what our cute little nieces and nephews are doing and who’s got a dog and who’s got a cat.

SV: Right, so family and friend relations are the reason we all signed up. To get pictures of our cousins’ kids graduating from high school. And to see baby pictures and puppy pictures. And Instagram does that for us. That’s one of the reasons Instagram is actually a wonderful platform. No one can share anything in a sense – you can’t amplify what other people post. It’s a really hard platform to use for propaganda. It has been tried. Propaganda occasionally appears, but it’s just really hard to be effective, because it doesn’t have those sharing dynamics and those scoring dynamics. But Instagram does what we wish Facebook did for us, what we originally signed up for Facebook to do. The problem is that Zuckerberg’s vision for Facebook was to be the operating system of our lives. To be the totality of our awareness of our interactions with our fellow humans. And that ambition opened it up to amplify all of our human frailties and faults. And that’s what has made Facebook a terrible thing. Had Facebook stayed more like Instagram, it would not be nearly as wealthy or influential but, man, it would be a lot more fun, and better for us. I wish Instagram were independent, I wish we could break it off, and watch as people spend more time on Instagram and less time on Facebook, and watch as Instagram competes with Facebook. Because right now Instagram cannot compete with Facebook, because Facebook will not let it.

DD: So really your bottom line is to get people to the consciousness where they’re willing to say to Mark Zuckerberg “We are not your gerbils.”

SV: Yes, that would be lovely! I really want us to act as citizens, as global citizens. I want us to be concerned about how Facebook has structured politics in the Philippines and Myanmar. About the ways habitual use of Facebook has coarsened us. I want us to be concerned about the fact that Facebook has crowded out other possible media and platforms that might enhance deliberation.

DD: And that it is, as you said, a distribution system for propaganda.

SV: Absolutely. If we can engage with Facebook as citizens and not as Facebook users or consumers, and we rediscover our power to motivate our legislators to pay attention to our needs, we have a shot. Nothing about that will be easy, or quick. But it is our only power. We do have power as citizens. We do have the ability to command our legislators to pay attention even if we ultimately can’t dictate the results. With slow steady concerted pressure, we have a chance.