Breaking: Moguls Fear AI Apocalypse

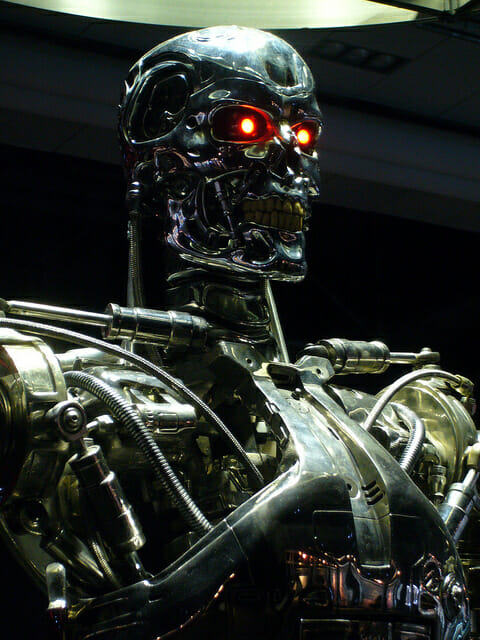

A funny thing happened on the way to the Singularity. In the past few months, some of the tech industry’s most prominent figures (Elon Musk, Bill Gates), as well as at least one associated guru (Stephen Hawking), have publicly worried about the consequences of out-of-control artificial intelligence. They fear nothing less than the annihilation of humanity. Heady stuff, dude.

These pronouncements come meme-ready—apocalyptic, pithy, trading on familiar Skynet references—grade-A ore for the viral mill. The bearers of these messages seem utterly serious, evincing not an inkling of skepticism. “I think we should be very careful about artificial intelligence,” Elon Musk said. “If I had to guess at what our biggest existential threat is, it’s probably that.”

“The development of full artificial intelligence could spell the end of the human race,” said Stephen Hawking, whose speech happens to be aided by a comparatively primitive artificial intelligence.

Gates recently completed the troika, taking a more circumspect, but still troubled, position. During a Reddit AMA, he wrote: “I agree with Elon Musk and some others on this and don’t understand why some people are not concerned.”

It’s easy to see why these men expressed these fears. For one thing, someone asked them. This is no small distinction. Most people are not, in their daily lives, asked whether they think super-smart computers are going to take over the world and end humanity as we know it. And if they are asked, the questioner is usually not rapt with attention, hanging on every word as if it were gospel.

This may sound pedantic, but the point is that it’s pretty fucking flattering—to one’s ego, to every nerd fantasy one has ever pondered about the end of days—to be asked these questions, knowing that the answer will be immediately converted (perhaps by a machine!) into headlines shared all over the world. Musk, a particularly skilled player of media hype for vaporous ideas like his Hyperloop, must have been aware of these conditions when he took up the question at an MIT student event in October.

Another reason Silicon Valley has begun spinning up its doomsday machine is that the tech industry, despite its agnostic leanings, has long searched for a kind of theological mantle that it can drape over itself. Hence the popularity of Arthur C. Clarke’s maxim: “Any sufficiently advanced technology is indistinguishable from magic.” Any sufficiently advanced religion needs its eschatological prophecies, and the fear of AI is fundamentally a self-serving one. It implies that the industry’s visionaries might create something so advanced that even they might not be able to control it. It places them at the center of the mechanical universe, where their invention—not God’s, not ExxonMobil’s—threatens the human species.

But AI is also seen as a risk worth taking. Rollo Carpenter, the creator of Cleverbot, an app that learns from its conversations with human beings, told the BBC, “I believe we will remain in charge of the technology for a decently long time and the potential of it to solve many of the world problems will be realised.”

There’s a clever justification embedded in here, the notion that we have to clear the runway for technologies that might solve our problems, but that might also, Icarus-like, become too bold, and lead to disaster. Carpenter’s remarks are, like all of the other ones shared here, conveniently devoid of any concerns about what technologies of automation are already doing to people and economic structures now. For that’s really the fear here, albeit in a far amplified form: that machines will develop capabilities, including a sense of self-direction, that render human beings useless. We will become superfluous machines—which is the same thing as being dead.

For many participants in today’s technologized marketplace, though, this is already the case. They have been replaced by optical character recognition software, which can read documents faster than they can; or by a warehouse robot, which can carry more packages; or by an Uber driver, who doesn’t need a dispatcher and will soon be replaced by a more efficient model—that is, a self-driving car. The people who find themselves here, among the disrupted, have been cast aside by the same forces of technological change that people like Gates and Musk treat as immutable.

Of course, if you really worry about what a business school professor might call AI’s “negative externalities,” then there are all kinds of things you can do—like industry conclaves, mitigation studies, campaigns to open-source and regulate AI technologies. But then you might risk deducing that many of the concerns we express regarding AI—a lack of control, environmental devastation, a mindless growth for the sake of growth, the rending of social and cultural fabric in service of a disinterested higher authority ravenous for ever more information and power—are currently happening.

Take a look out the window at Miami’s flooded downtown, the e-waste landfills of Ghana, or the fetid dormitories of Foxconn. To misappropriate the prophecy of another technological sage: the post-human dystopia is already here; it’s just not evenly distributed yet.